The Importance of Play in Learning to Program

Advice on learning to program usually emphasizes structured study and practice. These elements are crucial, but an equally significant and frequently overlooked one is *play*. Incorporating play into learning to program can strengthen creativity, problem-solving skills, and overall engagement.

Encourages Creativity and Exploration

Programming is both an art and a science. By approaching it through play, learners are encouraged to explore, experiment, and create without the pressure of achieving specific outcomes. Play opens up opportunities for learners to test out different ideas, try new techniques, and see how various components of code interact in a low-stakes environment. This freedom to explore fosters creativity, allowing programmers to think outside the box and come up with innovative solutions to problems.

For example, a beginner may start by creating a simple game or a fun animation using a language like Python or JavaScript. Through this playful approach, they can experiment with variables, loops, and functions, learning key programming concepts in an enjoyable and intuitive way. This exploration not only helps solidify foundational skills but also sparks a genuine interest in programming.
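As an illustration of the kind of small game a beginner might build, here is a minimal sketch of a number-guessing game in Python. The function names (`give_hint`, `play`) and the 1 to 20 range are made up for this example; the point is only that variables, a loop, and a couple of functions are enough to make something playable.

```python
import random


def give_hint(guess, secret):
    """Compare a guess with the secret number and describe the result."""
    if guess < secret:
        return "too low"
    if guess > secret:
        return "too high"
    return "correct"


def play(secret, guesses):
    """Try each guess in turn; return the number of attempts used, or None."""
    for attempt, guess in enumerate(guesses, start=1):
        hint = give_hint(guess, secret)
        print(f"Guess {attempt}: {guess} is {hint}")
        if hint == "correct":
            return attempt
    return None  # ran out of guesses without finding the secret


if __name__ == "__main__":
    # Hypothetical setup: the computer picks a number and a very simple
    # "player" guesses 1, 2, 3, ... until it finds the secret.
    secret = random.randint(1, 20)
    play(secret, range(1, 21))
```

From here a learner can keep tinkering: read guesses from the keyboard with `input()`, limit the number of attempts, or change the range to see how the game feels.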

Enhances Problem-Solving Skills

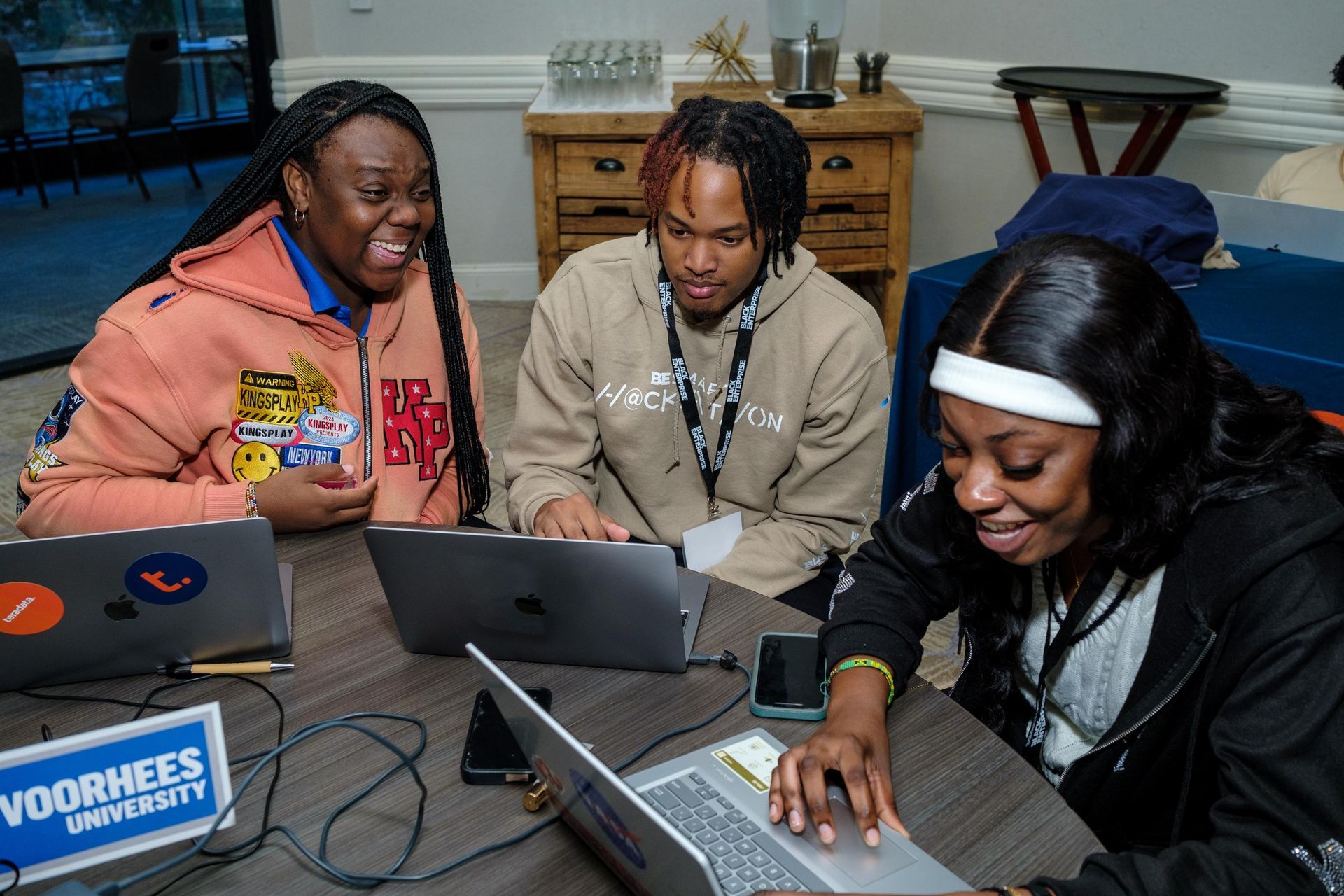

Play inherently involves challenges and obstacles, making it an excellent way to develop problem-solving skills. When learners engage with programming through playful activities such as puzzles, coding games, or challenges, they are encouraged to think critically and come up with solutions. These activities often present problems in a more engaging and digestible manner, making it easier for learners to grasp complex concepts.

A study conducted by Zosh et al. (2018) highlights the importance of play in learning, noting that playful learning environments enhance cognitive abilities, particularly problem-solving and critical thinking skills. For example, platforms like CodeCombat or Scratch use game-based learning to teach programming. In these environments, learners solve coding puzzles or build interactive stories, which require them to break down problems into smaller parts, debug errors, and iterate on their solutions. This kind of playful problem-solving is not only effective but also enjoyable, helping learners build confidence in their programming abilities.
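To make the decompose, debug, and iterate cycle concrete, here is a small sketch of a grid puzzle in Python. It is not taken from CodeCombat or Scratch; the names `MOVES`, `step`, `run`, and `reaches_goal` and the puzzle itself are invented for illustration. The problem is split into small parts: one function moves the character a single step, another runs a whole list of commands, and a third checks whether the goal was reached.

```python
# Each command shifts the character by one square on an (x, y) grid.
MOVES = {"up": (0, 1), "down": (0, -1), "left": (-1, 0), "right": (1, 0)}


def step(position, command):
    """Return the new position after applying a single command."""
    dx, dy = MOVES[command]
    return (position[0] + dx, position[1] + dy)


def run(commands, start=(0, 0)):
    """Apply every command in order and return the final position."""
    position = start
    for command in commands:
        position = step(position, command)
    return position


def reaches_goal(commands, goal, start=(0, 0)):
    """Check whether a list of commands ends exactly on the goal square."""
    return run(commands, start) == goal


if __name__ == "__main__":
    goal = (2, 2)
    first_try = ["right", "right", "up"]
    print(run(first_try), reaches_goal(first_try, goal))    # stops one square short

    second_try = first_try + ["up"]                          # iterate on the solution
    print(run(second_try), reaches_goal(second_try, goal))
```

The first attempt falls short, and printing the final position shows where it went wrong, so the fix is a single extra command. That short loop of trying, inspecting, and adjusting is the habit these puzzles are meant to build.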

Increases Motivation and Engagement

One of the biggest challenges in learning programming is maintaining motivation, especially when faced with complex or tedious tasks. Play can serve as a powerful motivator. By integrating fun and playful activities into the learning process, programming becomes less of a chore and more of an enjoyable challenge. This can help learners stay engaged and committed to their coding journey.

Moreover, playful environments often offer immediate feedback, which reinforces learning and provides a sense of accomplishment. Whether it’s seeing a character move on the screen in response to their code or successfully completing a coding challenge, these small victories keep learners motivated and excited about their progress.

Conclusion

Incorporating play into learning to program is more than just a fun diversion; it is a powerful educational tool. By encouraging creativity, enhancing problem-solving skills, and increasing motivation, play can transform the experience of learning to program. For beginners and experienced coders alike, integrating playful exploration into the learning process can lead to deeper understanding, sustained engagement, and a lifelong passion for programming.